OpenAI rolls out ChatGPT Trusted Contact for adults

Fri, 8th May 2026

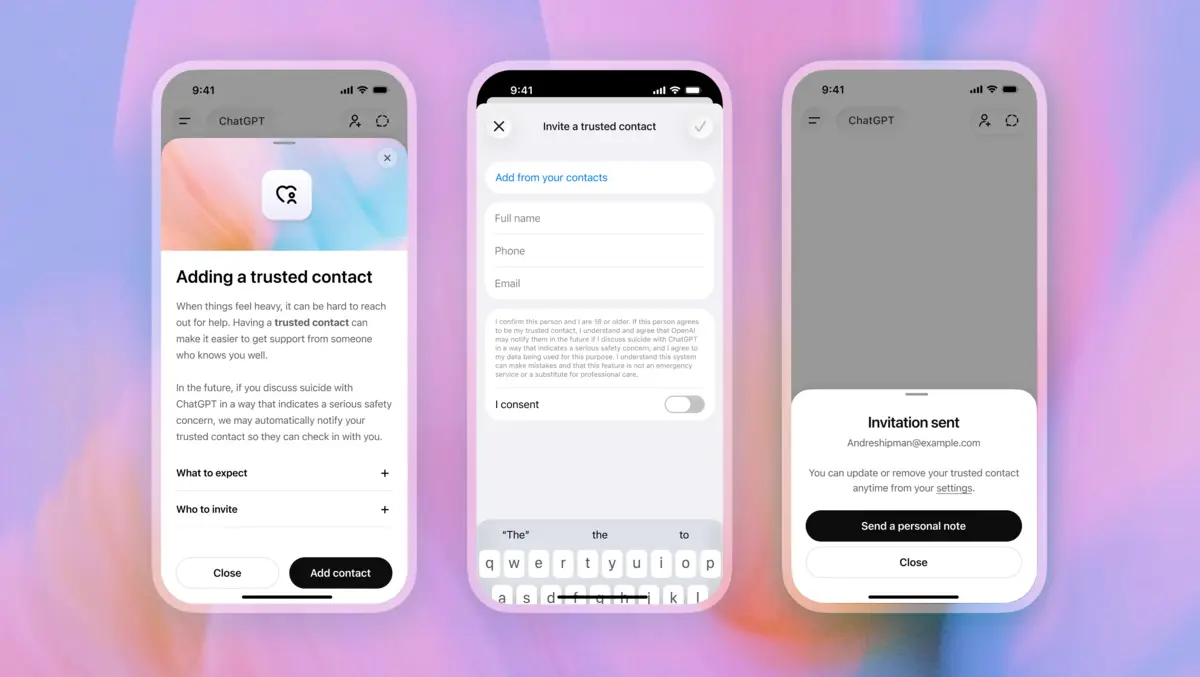

OpenAI has begun rolling out an optional Trusted Contact feature in ChatGPT for adult users. It lets people nominate one person who may be alerted in serious self-harm situations.

The tool expands an existing safety notification system previously limited to linked teen accounts through parental controls. Adults aged 18 and over can now add a trusted person through ChatGPT settings, with the minimum age set at 19 in South Korea.

Users can nominate one adult, such as a friend, family member or caregiver. That person must accept an invitation within a week for the arrangement to become active. If the invitation is declined, the user can choose someone else.

If ChatGPT's monitoring systems detect that a user may be discussing self-harm in a way that suggests a serious safety concern, the service tells the user it may notify the nominated contact. It also prompts the user to consider reaching out directly and suggests ways to start that conversation.

A trained review team then assesses the case before any alert is sent. Every notification is subject to human review, which OpenAI aims to complete in under one hour.

The message sent to a Trusted Contact is limited in scope. It says that self-harm came up in a potentially concerning way and encourages the recipient to check in, but does not include chat transcripts or other conversation details.

Alerts may be delivered by email or text message, or by in-app notification if the contact has a ChatGPT account. Users can edit or remove their nominated contact at any time, and the Trusted Contact can also remove themselves.

Safety layers

The new tool sits alongside other measures in ChatGPT for sensitive conversations. These include prompts to contact emergency services, crisis helplines, mental health professionals or trusted people, as well as refusals to provide instructions for suicide or self-harm.

OpenAI has also worked to improve how ChatGPT identifies signs of distress, responds to different levels of risk and, in some cases, suggests breaks after prolonged use. More than 170 mental health experts have informed that work.

Trusted Contact was developed with input from clinicians, researchers and organisations specialising in mental health and suicide prevention. The effort drew on OpenAI's Global Physicians Network, which it said includes more than 260 licensed physicians across 60 countries, and its Expert Council on Well-Being and AI.

OpenAI also worked with external groups including the American Psychological Association. It presents the product as an added support mechanism, not a substitute for crisis services or professional care.

Expert commentary in the announcement focused on the role of personal connection in moments of distress. "Psychological science consistently shows that social connection is a powerful protective factor, especially during periods of emotional distress. Helping people identify a trusted person in advance, while preserving their choice and autonomy, can make it easier to reach out to real-world support when it matters most," said Dr. Arthur Evans, Chief Executive Officer, American Psychological Association.

The design reflects a broader debate over how AI products should respond when users disclose acute mental health struggles. Companies running conversational systems have faced scrutiny over whether those products can reliably identify crisis situations, protect user privacy and direct people to real-world help without overreaching.

OpenAI acknowledged those limits, saying serious safety situations are rare and notifications may not always reflect exactly what someone is experiencing. The goal, it said, is to provide another route to human support while keeping conversation content private.

The service notifies users before an alert may be sent rather than operating without their knowledge, and the trusted person receives only a general warning. That approach appears intended to balance intervention with user autonomy and privacy.

Human oversight

Automated systems and trained reviewers must both be involved before a Trusted Contact is notified. That combination of machine detection and human judgment is becoming a common pattern in safety systems for generative AI, particularly in highly sensitive cases where companies want to reduce false alarms.

Outside experts involved in the initiative linked the feature to a broader ambition for AI systems to support human relationships rather than replace them. "One of AI's biggest promises is how it can foster authentic human-to-human connection and psychological safety. I am encouraged by ChatGPT's Trusted Contact feature, which offers a step forward to human empowerment, especially during moments of vulnerability," said Dr. Munmun De Choudhury, J. Z. Liang Professor of Interactive Computing at Georgia Tech and member of the Expert Council on Well-Being and AI.

ChatGPT will continue to direct users to local crisis resources and emergency services where appropriate, while refusing requests for information that could facilitate suicide or self-harm.

Trusted Contact marks another step in how major AI developers are adding intervention tools to consumer chatbots as those products become part of users' daily lives and, at times, their most sensitive conversations.